COMMUNITY PAGE

Run Wan 2.1 on Floyo

AI VIDEO GENERATION

#1 on VBench with 86.22%. Text-to-video, image-to-video, video editing, and text-to-image. The first video model with bilingual text rendering (Chinese + English). Apache 2.0 licensed.

Run Alibaba's Wan 2.1 through ComfyUI in your browser. No API key, no installs, no local GPU.

| Spec | Details |
|---|---|
| Parameters | 14B / 1.3B |
| VBench Score | 86.22% (#1) |
| Resolution | 480p / 720p |
| License | Apache 2.0 |

No installation. Runs in browser. Updated April 2026.

What You Get

Wan 2.1 is Alibaba's foundational open-source video generation model, released in February 2025. It ranks #1 on VBench with 86.22%, surpassing Sora (84.28%) and Luma (83.61%). It ships in four variants: T2V-14B, T2V-1.3B, I2V-14B-720P, and I2V-14B-480P. The 1.3B version runs on consumer GPUs with just 8.19GB of VRAM. It is the first video model to render bilingual text (Chinese + English). The Wan series has over 5.4 million downloads, and Wan 2.1 is available as ComfyUI nodes on Floyo.

WAN 2.1 WORKFLOWS ON FLOYO

What is Wan 2.1?

Wan 2.1 is Alibaba's open-source video generation model, released on February 22, 2025 under the Apache 2.0 license. It ranks #1 on VBench (86.22%), surpassing Sora (84.28%) and Luma (83.61%). The series includes four models: T2V-14B and T2V-1.3B for text-to-video, and I2V-14B at 720P and 480P for image-to-video. It is the first video model capable of rendering bilingual text (Chinese + English) in generated video.

At its core is a diffusion transformer paired with Wan-VAE, a 3D causal variational autoencoder. Wan-VAE compresses video more efficiently than traditional VAEs while preserving temporal consistency. The VAE can encode and decode 1080P video of unlimited length, reconstructs video 2.5x faster than HunYuanVideo's VAE on A800 GPUs, and serves as the shared foundation across the entire Wan model family (2.1, 2.2, 2.6, 2.7).

The 14B model is the quality tier. It excels at instruction adherence, complex motion generation, physical modeling, and text rendering. The 1.3B model is the accessibility tier. It runs on consumer-grade GPUs with just 8.19GB VRAM and generates a 5-second 480P video in about 4 minutes on an RTX 4090. Even at 1.3B parameters, it outperforms some larger open-source 5B models.

Wan 2.1 was the starting point for one of the most active open-source video generation ecosystems. Community contributions include CausVid speed LoRAs, VACE (Video All-in-one Creation and Editing), first/last frame control, GGUF quantized versions, and dozens of ComfyUI workflows. Over 5.4 million downloads on HuggingFace and ModelScope to date.

On Floyo, Wan 2.1 runs through native ComfyUI nodes on H100 NVL GPUs. Workflows cover vid2vid style transfer, text-to-image, InfiniteTalk (talking head generation), and vertical video FX insertion with Qwen VLM. No model downloads, no setup.

What are Wan 2.1's technical specifications?

Wan 2.1 uses a diffusion transformer architecture with the Wan-VAE (3D causal variational autoencoder). It comes in two parameter sizes: 14B for maximum quality and 1.3B for consumer GPU accessibility. The 14B model supports text-to-video and image-to-video at 480P and 720P. The 1.3B model targets 480P and can generate 720P with reduced stability. Both share the Wan-VAE and support bilingual text rendering.

| Spec | Details |
|---|---|
| Developer | Alibaba (Tongyi/Wan AI) |
| Architecture | Diffusion Transformer + Wan-VAE (3D causal variational autoencoder) |
| T2V-14B | 14B parameters, text-to-video, 480P + 720P |
| T2V-1.3B | 1.3B parameters, text-to-video, best at 480P (720P less stable) |
| I2V-14B-720P | 14B parameters, image-to-video at 720P |
| I2V-14B-480P | 14B parameters, image-to-video at 480P |
| Duration | Up to 5 seconds per generation |
| VAE | Wan-VAE (supports unlimited-length 1080P, 2.5x faster than HunYuanVideo) |
| Text Rendering | Bilingual (Chinese + English) in generated video |
| VBench Score | 86.22% (#1, surpassing Sora 84.28% and Luma 83.61%) |
| Min VRAM (1.3B) | 8.19GB (consumer GPUs) |
| Speed (1.3B on RTX 4090) | ~4 minutes for a 5-second 480P video |
| Tasks | Text-to-video, image-to-video, video editing, text-to-image, video-to-audio |
| LoRA Support | Yes (CausVid speed LoRAs, style/character LoRAs) |
| License | Apache 2.0 (full commercial rights) |
| ComfyUI Access | Native support on Floyo (4+ workflows) |
| Release Date | February 22, 2025 |
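
The variant rows in the table can be read as a small lookup: given a task and a target resolution, which checkpoints apply? The sketch below encodes only what the table states. The names `WanVariant`, `VARIANTS`, and `pick_variants` are hypothetical helpers for illustration, not part of any Wan or Floyo API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WanVariant:
    name: str            # variant name as listed in the spec table
    task: str            # "t2v" (text-to-video) or "i2v" (image-to-video)
    params_b: float      # parameter count in billions
    resolutions: tuple   # resolutions the table lists as the variant's target


# Values copied from the spec table. T2V-1.3B can also attempt 720P,
# but the table marks that as less stable, so it is left out here.
VARIANTS = (
    WanVariant("T2V-14B", "t2v", 14.0, ("480P", "720P")),
    WanVariant("T2V-1.3B", "t2v", 1.3, ("480P",)),
    WanVariant("I2V-14B-720P", "i2v", 14.0, ("720P",)),
    WanVariant("I2V-14B-480P", "i2v", 14.0, ("480P",)),
)


def pick_variants(task: str, resolution: str) -> list:
    """Return the names of variants that match a task and resolution."""
    return [
        v.name
        for v in VARIANTS
        if v.task == task and resolution in v.resolutions
    ]


if __name__ == "__main__":
    print(pick_variants("t2v", "480P"))
    print(pick_variants("i2v", "720P"))
```

On Floyo the choice is made by picking a workflow template rather than by code, so this is only a way to make the table's logic explicit.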

What can you create with Wan 2.1?

Wan 2.1 covers text-to-video generation, image-to-video animation, vid2vid style transfer, text-to-image, talking head video (InfiniteTalk), vertical video FX insertion, and video editing. The Floyo workflows combine Wan 2.1 with Qwen VLM for intelligent video effects and support both landscape and vertical output formats.

| Capability | What It Does | Use Case |
|---|---|---|
| Text-to-Video | Generate 480P or 720P video from text prompts. Strong motion dynamics, physical modeling, and instruction adherence. | Short films, product demos, explainer videos, social content |
| Image-to-Video | Animate still images into video at 480P or 720P. Preserves the source image composition while adding natural motion. | Photo animation, product showcases, character turnarounds |
| Vid2Vid Style Transfer | Restyle existing video footage. Transform the visual style while preserving motion, structure, and timing. | Aesthetic adaptation, brand-specific looks, creative reimagining |
| InfiniteTalk | Generate talking head videos with lip-synced speech. Continuous generation for extended dialogue sequences. | Podcast visuals, presentation videos, avatar content |
| Vertical Video FX | Insert AI-generated visual effects into vertical video. Uses Qwen VLM for intelligent scene understanding and effect placement. | TikTok, Instagram Reels, YouTube Shorts, social ads |
| Text-to-Image | Generate images from text prompts using the same diffusion transformer. Shares quality characteristics with the video models. | Concept art, thumbnails, storyboard frames |

What are Wan 2.1's key features?

Wan 2.1's feature set centers on three things: benchmark-leading video quality, consumer GPU accessibility, and an ecosystem that grew into the most active open-source video generation community in 2025. The model's combination of quality, size options, and open licensing created a foundation that subsequent Wan versions (2.2, 2.6, 2.7) all build on.

#1 VBench Score

Wan 2.1 achieved 86.22% on VBench, a widely used benchmark suite for video generation models. This surpassed Sora (84.28%) and Luma (83.61%), as well as Pika. The score reflects strong performance in scene generation, motion smoothness, spatial accuracy, and instruction adherence.

Wan-VAE

The 3D causal variational autoencoder at the core of Wan 2.1 compresses video more efficiently than traditional VAEs while preserving temporal consistency. It supports unlimited-length 1080P video encoding and decoding, runs 2.5x faster than HunYuanVideo's VAE, and serves as the shared backbone for the entire Wan family.

Consumer GPU Support (1.3B)

The T2V-1.3B model requires just 8.19GB of VRAM. It runs on consumer cards such as the RTX 3080, and 8GB cards such as the RTX 4060 sit right at that threshold. Despite its small size, it outperforms some larger 5B open-source models and approaches closed-source quality. This made Wan 2.1 the entry point for creators who had never run local video generation before.
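
A quick way to sanity-check the consumer-GPU claim is to compare the 8.19GB figure against a card's nominal VRAM. The sketch below does that arithmetic and also turns the page's "about 4 minutes for a 5-second clip" figure into a compute-per-second-of-video ratio. The nominal VRAM sizes are an assumption added for illustration (base desktop models), and real headroom depends on units (GB vs GiB), driver overhead, offloading, and quantization.

```python
REQUIRED_GB = 8.19  # T2V-1.3B VRAM figure quoted on this page

# Assumption: nominal VRAM of the base desktop cards, not taken from this page.
CARD_VRAM_GB = {
    "RTX 4060": 8,
    "RTX 3080": 10,
    "RTX 4080": 16,
    "RTX 4090": 24,
}


def headroom_gb(card: str, required_gb: float = REQUIRED_GB) -> float:
    """Nominal VRAM minus the quoted requirement; negative means a tight fit."""
    return round(CARD_VRAM_GB[card] - required_gb, 2)


def compute_seconds_per_video_second(gen_minutes: float, clip_seconds: float) -> float:
    """How many seconds of compute one second of output video costs."""
    return gen_minutes * 60 / clip_seconds


if __name__ == "__main__":
    for card in CARD_VRAM_GB:
        print(card, headroom_gb(card))
    # Page figure: ~4 minutes for a 5-second 480P clip on an RTX 4090.
    print(compute_seconds_per_video_second(4, 5))
```

Cards with little or negative nominal headroom are the ones where the community GGUF quantized builds mentioned on this page are most likely to matter.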

Bilingual Text Rendering

Wan 2.1 is the first video generation model that renders both Chinese and English text in generated video. Signs, captions, labels, and on-screen text appear legibly. This extends the model's practical applications to marketing, education, and international content production.

Massive Community Ecosystem

The Wan series has over 5.4 million downloads. Community contributions include CausVid LoRAs (about a 10x speed-up with 3-step generation), VACE (Video All-in-one Creation and Editing), first/last frame control models, GGUF quantized versions, and dozens of ComfyUI workflows. The Wan 2.1 ecosystem is the most active open-source video generation community.
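
The CausVid figures are worth a moment of arithmetic: going from 50 steps to 3 is roughly a 16.7x cut in step count, while the quoted speed-up is about 10x. One way to reconcile the two, under a deliberately simple model that is an assumption here (total time = fixed overhead + steps × per-step cost), is to solve for the share of the baseline run spent on fixed work such as text encoding and VAE decoding. The function names are hypothetical.

```python
def step_ratio(baseline_steps: int, fast_steps: int) -> float:
    """How many times fewer denoising steps the fast path uses."""
    return baseline_steps / fast_steps


def implied_fixed_fraction(
    baseline_steps: int, fast_steps: int, observed_speedup: float
) -> float:
    """Fraction of baseline time that is fixed overhead, under the toy model.

    With f = fixed fraction and r = fast_steps / baseline_steps:
        T_fast / T_base = f + r * (1 - f) = 1 / observed_speedup
    Solving for f gives the expression below.
    """
    r = fast_steps / baseline_steps
    return (1.0 / observed_speedup - r) / (1.0 - r)


if __name__ == "__main__":
    print(f"step ratio: {step_ratio(50, 3):.1f}x")
    print(f"implied fixed share: {implied_fixed_fraction(50, 3, 10.0):.1%}")
```

Under that model a fixed share of only a few percent is enough to pull a 16.7x step reduction down to about 10x end to end. Real runs also differ in per-step cost, so treat this as a rough consistency check rather than a measurement.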

Apache 2.0 License

The license grants full commercial rights. Inference code, model weights, and all variants are open, and the same license covers the entire Wan series. You can deploy, modify, fine-tune, train LoRAs, and build commercial products, subject to the license's attribution and notice requirements.

How does Wan 2.1 compare to other video models?

Wan 2.1 holds #1 on VBench (86.22%) for overall video generation quality. Its main advantages are the Apache 2.0 license, 1.3B consumer GPU variant, and the largest open-source ecosystem. Wan 2.2 (its successor) adds MoE architecture for cleaner output. Wan 2.7 adds image generation and 4K. Kling and Sora offer higher resolution but are closed-source.

| Model | VBench | Resolution | Open Source | Consumer GPU |
|---|---|---|---|---|
| Wan 2.1 | 86.22% (#1) | 480p / 720p | Yes (Apache 2.0) | Yes (1.3B: 8.19GB) |
| Wan 2.2 | Higher (MoE) | 720p | Yes (Apache 2.0) | Yes (5B model) |
| Sora | 84.28% | 1080p | No | No |
| Luma | 83.61% | 1080p | No | No |

Source: VBench leaderboard, Alibaba Wan2.1 official documentation, HuggingFace model cards, and third-party benchmark comparisons as of April 2026.

How does Wan 2.1 work?

Wan 2.1 is a diffusion transformer that generates video by progressively denoising a latent representation. The Wan-VAE encodes video into a compressed 3D latent space, the diffusion transformer operates on that latent space to generate frames, and the VAE decodes the result back into pixel space. The architecture handles text and visual tokens in a unified framework.

The Wan-VAE is the core innovation. It is a 3D causal variational autoencoder that compresses video spatially and temporally while preserving frame-to-frame consistency. It supports encoding and decoding 1080P video of unlimited length without losing temporal information. On A800 GPUs, it reconstructs video 2.5x faster than HunYuanVideo's VAE.
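
To make "compresses video spatially and temporally" concrete, the sketch below computes the latent tensor shape for a clip. The compression factors (4x in time, 8x in each spatial dimension) and the 16 latent channels are assumptions not stated on this page, so they are exposed as parameters. The `1 + (frames - 1) // t_factor` form reflects the causal design, in which the first frame is encoded on its own and later frames are grouped.

```python
def latent_shape(
    frames: int,
    height: int,
    width: int,
    t_factor: int = 4,   # assumed temporal compression
    s_factor: int = 8,   # assumed spatial compression per axis
    channels: int = 16,  # assumed latent channel count
) -> tuple:
    """Shape (channels, frames, height, width) of the latent for a clip."""
    if (frames - 1) % t_factor != 0:
        raise ValueError("frames must be of the form t_factor * k + 1")
    if height % s_factor or width % s_factor:
        raise ValueError("height and width must be multiples of s_factor")
    latent_frames = 1 + (frames - 1) // t_factor
    return (channels, latent_frames, height // s_factor, width // s_factor)


def compression_ratio(frames: int, height: int, width: int) -> float:
    """RGB pixel values divided by latent values for the same clip."""
    c, t, h, w = latent_shape(frames, height, width)
    return (3 * frames * height * width) / (c * t * h * w)


if __name__ == "__main__":
    # Hypothetical example clip: 81 frames at 480x832.
    print(latent_shape(81, 480, 832))
    print(round(compression_ratio(81, 480, 832), 2))
```

The diffusion transformer then works on this much smaller tensor instead of raw pixels, which is where most of the efficiency comes from.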

For text-to-video, the model processes your text prompt through a language encoder, generates a noisy latent representation, and iteratively denoises it over multiple steps (typically 30-50 for full quality, or 3-6 with CausVid speed LoRAs). For image-to-video, the source image is encoded into the latent space as a conditioning signal that anchors the first frame.
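
The control flow described above (encode the prompt, start from noise, denoise over a fixed number of steps, hand the result to the decoder) can be sketched with stand-in functions. This is a toy illustration only: `encode_prompt` and `denoise_step` are hypothetical placeholders for the language encoder and the diffusion transformer, and the update rule is not Wan 2.1's actual sampler.

```python
import random


def encode_prompt(prompt: str, dim: int = 8) -> list:
    """Stand-in for the language encoder: a fixed-size pseudo-embedding."""
    padded = prompt[:dim].ljust(dim)
    return [(ord(ch) % 17) / 16.0 for ch in padded]


def denoise_step(latent: list, cond: list, step: int, total_steps: int) -> list:
    """Stand-in for one transformer pass: nudge the latent toward the condition.

    The weight grows to 1.0 on the final step, so the loop always ends
    on the conditioned target regardless of the step count.
    """
    weight = 1.0 / (total_steps - step)
    return [x + weight * (c - x) for x, c in zip(latent, cond)]


def generate_latent(prompt: str, steps: int = 30, seed: int = 0) -> list:
    """Run the toy loop: noise in, conditioned latent out."""
    rng = random.Random(seed)
    cond = encode_prompt(prompt)
    latent = [rng.gauss(0.0, 1.0) for _ in cond]  # start from pure noise
    for step in range(steps):
        latent = denoise_step(latent, cond, step, steps)
    return latent  # a real pipeline would pass this to the VAE decoder


if __name__ == "__main__":
    full = generate_latent("a red fox", steps=30)
    fast = generate_latent("a red fox", steps=3)
    print(len(full), len(fast))
```

In the real model the step count trades speed against quality, which is why the 3-6 step CausVid path exists alongside the 30-50 step default. In this toy the two runs converge to the same point by construction, so it shows the loop structure rather than that trade-off.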

On Floyo, Wan 2.1 runs through native ComfyUI nodes on H100 NVL GPUs. Model weights are pre-loaded. You can chain Wan 2.1 with other nodes: generate video, apply style transfer, add Qwen VLM-powered FX, upscale, and export. The vid2vid workflow for restyling existing footage and the InfiniteTalk workflow for talking heads are pre-configured and ready to run.

Frequently Asked Questions

Common questions about running Wan 2.1 on Floyo.

How much does it cost to run Wan 2.1 on Floyo?

You can start with Floyo's free pricing plan. To continue using the service beyond the free tier, upgrade your Floyo pricing plan. Wan 2.1 is open-source under Apache 2.0, so there is no additional API cost beyond your Floyo plan.

How do I run Wan 2.1 on Floyo?

Open Floyo in your browser, search "Wan 2.1" in the template library, and pick a workflow. Click Run, write your prompt, and generate. Floyo handles the GPU, ComfyUI environment, and model weights. No local install, no Python setup.

Who made Wan 2.1?

Alibaba's Tongyi/Wan AI team. Wan 2.1 was released on February 22, 2025. It is the foundation model for the Wan series, which includes Wan 2.1-VACE (May 2025), Wan 2.2 (July 2025), Wan 2.6 (December 2025), and Wan 2.7 (April 2026). The full series has over 5.4 million downloads.

How is Wan 2.1 different from Wan 2.2?

Wan 2.1 uses a standard diffusion transformer. Wan 2.2 introduced the Mixture-of-Experts (MoE) architecture that separates denoising into high-noise and low-noise expert models for cleaner cinematic output. Wan 2.2 also added a 5B hybrid model. Both are open-source under Apache 2.0. If you want the largest ecosystem of LoRAs and community workflows, Wan 2.1 has the edge. For cinematic quality, Wan 2.2 is the upgrade.

Does Wan 2.1 support LoRAs?

Yes. Wan 2.1 has the most mature LoRA ecosystem of any open-source video model. CausVid LoRAs reduce generation from 50 steps to 3 steps (about a 10x speed boost). Style LoRAs, character LoRAs, and motion LoRAs are widely available on Civitai and HuggingFace. The Floyo workflow "Wan 2.1 Vid2Vid Style Transfer" uses LoRA integration.

Can I combine Wan 2.1 with other models on Floyo?

Yes. Floyo runs ComfyUI, which lets you chain multiple models. The "Vertical Video FX Inserter" workflow combines Wan 2.1 with Qwen VLM for intelligent effect placement. You can also chain Wan 2.1 with Fish Audio S2 for narration, Nano Banana for image generation, or any other ComfyUI-compatible model.

Can I use Wan 2.1 output commercially?

Yes. Wan 2.1 is released under the Apache 2.0 license, which grants full commercial usage rights. You can use generated videos in products, marketing, client work, and any other commercial context without additional licensing.

Should I use Wan 2.1 or Wan 2.7?

Wan 2.7 is the latest in the series with image generation, thinking mode, 4K output, and more features. Wan 2.1 has the most mature ecosystem with the widest range of community LoRAs, workflows, and extensions. For maximum flexibility and community support, start with Wan 2.1. For the latest features and highest quality, try Wan 2.7. Both are available on Floyo and can be used in the same pipeline.

Try Wan 2.1 on Floyo

#1 on VBench. Text-to-video, vid2vid style transfer, InfiniteTalk, and vertical video FX. Open source under Apache 2.0. Run it in your browser.

Related Reading

Film and Animation Workflows on Floyo

Vertical Video Production on Floyo

Last updated: April 2026. Specs from Alibaba Wan2.1 official documentation, HuggingFace model cards (Wan-AI/Wan2.1-T2V-14B), VBench leaderboard, ComfyUI Wiki, and Alibaba Cloud press releases.

Wan 2.1 Vid2Vid Style Transfer with Ditto

Tags: animation, Ditto, lora, VACE, Video2Video, Wan

Upload any video, describe a new style, and Wan 2.1 rewrites every frame. Ditto keeps motion and structure intact across anime, Pixar, clay, and dozens more.

Vertical Video FX Inserter - Qwen + Wan 2.1 FunControl

Tags: fx-integration, image-to-image, qwen, reference-image, upscaling, video-conditioning, wan21-funcontrol

text2image

Wan2.1

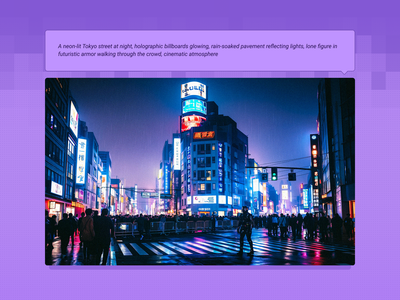

Created by @yanokusnir on Reddit, please support the original creator! https://www.reddit.com/r/StableDiffusion/comments/1lu7nxx/wan_21_txt2img_is_amazing/ If this is your workflow, please contact us at team@floyo.ai to claim it! Original post from the creator: Hello. This may not be news to some of you, but Wan 2.1 can generate beautiful cinematic images. I was wondering how Wan would work if I generated only one frame, so to use it as a txt2img model. I am honestly shocked by the results. All the attached images were generated in fullHD (1920x1080px) and on my RTX 4080 graphics card (16GB VRAM) it took about 42s per image. I used the GGUF model Q5_K_S, but I also tried Q3_K_S and the quality was still great. The only postprocessing I did was adding film grain. It adds the right vibe to the images and it wouldn't be as good without it. Last thing: For the first 5 images I used sampler euler with beta scheluder - the images are beautiful with vibrant colors. For the last three I used ddim_uniform as the scheluder and as you can see they are different, but I like the look even though it is not as striking. :) Enjoy.

Wan 2.1 Text2Image

Created by @yanokusnir on Reddit, please support the original creator! https://www.reddit.com/r/StableDiffusion/comments/1lu7nxx/wan_21_txt2img_is_amazing/ If this is your workflow, please contact us at team@floyo.ai to claim it! Original post from the creator: Hello. This may not be news to some of you, but Wan 2.1 can generate beautiful cinematic images. I was wondering how Wan would work if I generated only one frame, so to use it as a txt2img model. I am honestly shocked by the results. All the attached images were generated in fullHD (1920x1080px) and on my RTX 4080 graphics card (16GB VRAM) it took about 42s per image. I used the GGUF model Q5_K_S, but I also tried Q3_K_S and the quality was still great. The only postprocessing I did was adding film grain. It adds the right vibe to the images and it wouldn't be as good without it. Last thing: For the first 5 images I used sampler euler with beta scheluder - the images are beautiful with vibrant colors. For the last three I used ddim_uniform as the scheluder and as you can see they are different, but I like the look even though it is not as striking. :) Enjoy.

nikhil07

Wan 2.1 InfiniteTalk

Tags: animation, image to video, lipsync, vid2vid, video generation, wan

Wan 2.1 InfiniteTalk: talking video from audio and a reference clip.